Four streams of defects for prioritising bugfixes

How urgent is it to fix bugs? The question may sound ridiculous, but the answer isn't straightforward. Well, it depends on the bug, doesn't it?

This is the adapted transcript of the talk I gave to my cat because all conferences had been cancelled, and my internet was too slow for online events.

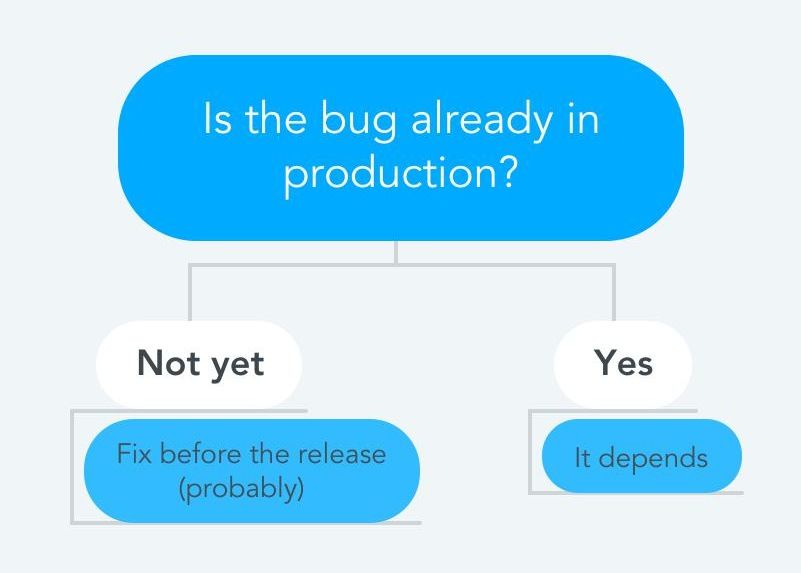

When a bug hasn't been released yet, prioritisation is simple: we have to fix it before the release. Of course, there may be exceptions, but it's a reasonable rule for most situations.

What about bugs that are already in production? Which of them are worth fixing?

It's all about impact

Do you need to fix a leaking tap in the kitchen?

Usually, it depends on how lazy you are, but I'll make the decision a little bit easier by giving you more data:

- Your partner likes to study there, and the sound of dripping water makes it hard to concentrate

- The water bill was $40 higher this month

- Last time you left some mugs in the sink, and they blocked the drain — you were just lucky to notice it and prevent the flood

I think this information will help you make the right decision without hesitation.

Same with bugs.

As soon as you know the impact, prioritisation becomes much easier.

Four streams of defects

To prioritise effectively, you need to answer many questions:

- How many clients are affected?

- Which piece of functionality works incorrectly?

- Are there other bugs we need to prioritise?

- When did it start happening?

- and so on

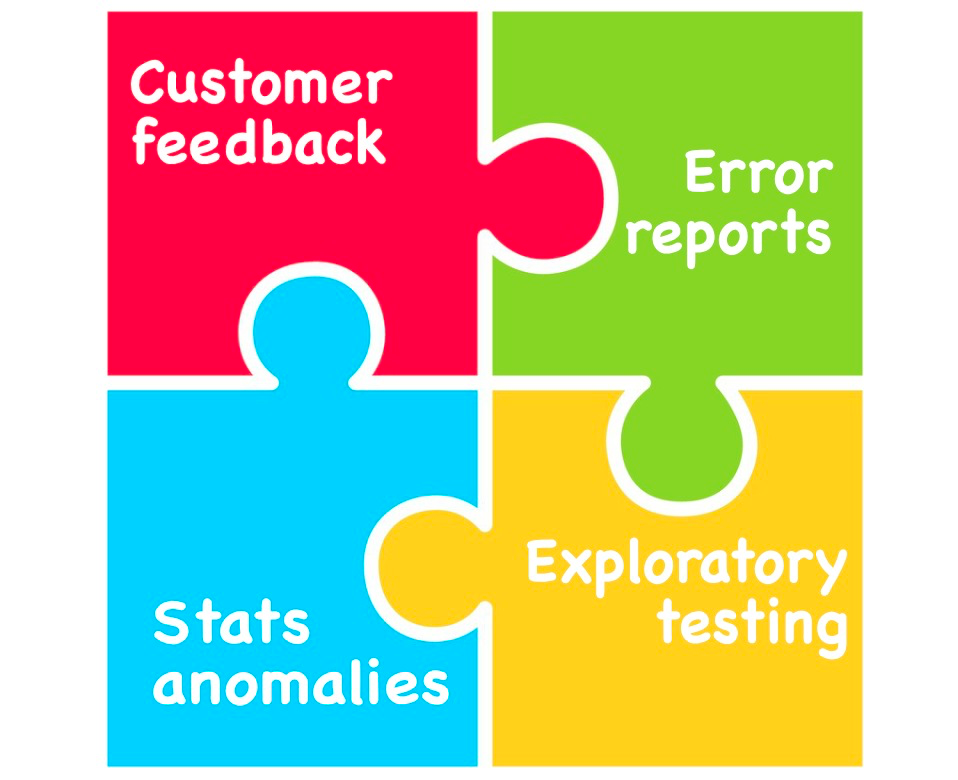

The way organisations deal with those questions depends on how they detect bugs. There are four big channels you can use to discover defects in your systems:

- Customer feedback

- Error reports

- Exploratory testing

- Stats anomalies

Most of the time, companies rely on only one or two channels and neglect the rest – I'll show the consequences of this approach later. For now, let's talk about each of the four streams of defects and how we can make the most of each one.

Customer feedback

This is the simplest and most ubiquitous way of finding bugs: delegate it to your clients. They don't like something, and they complain – you just have to listen and schedule the work.

Unfortunately, a lot of companies stop there and use only this channel.

Here is what it may lead to:

- Not all features are covered.

Your clients aren't going to complain if you stop showing ads by mistake. They won't notice if you break behavioural tracking either. The same goes for all non-customer-facing features of your product.

- Number of complaints as a prioritisation factor.

Some clients naturally report more issues than others, but does it make their reports more important? What about the people who gave up and stopped using your product?

- Technical support becomes a bottleneck.

Triaging incoming requests is tedious work that requires time and coordination. If all you have is plain text messages from users, it's going to be hard. Just be prepared.

- Hard to tell when it started happening.

The fact that a bug is reported today doesn't mean it's a recent one – it may have been there for years until it bothered this particular user.

How to use this channel effectively:

- Make sure clients can always send their feedback.

Offer multiple ways to reach you, especially if you use third-party widgets for collecting feedback (such as Userback). Advertise your Twitter account, support email, anything that can be a backup communication channel.

- Allow users to send you attachments.

Don't expect clients to send you proper bug reports using internal jargon. You may know that "☑️" is called a checkbox, but for them, it's going to be "that thing" or "that thing that doesn't work". Allow users to send you screenshots, gifs and videos in any format – it'll save lots of time for both sides.

- Collect as much metadata as possible.

Operating system version, browser type, screen resolution, locale, local time – everything that can help you reproduce the problem and is allowed by your privacy policy. This becomes even more important when clients report accessibility issues – they may have a non-trivial setup, but that doesn't make these errors any less important.
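As a rough illustration of the metadata point above, here is a minimal Python sketch of a feedback record that carries attachments and environment metadata alongside the user's message. The class, the field names and the sample values are all hypothetical; the idea is only that support can see at a glance which details are missing before asking the client for them.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List

# Hypothetical list of fields we would like to see on every report.
EXPECTED_METADATA = (
    "os_version",
    "browser",
    "screen_resolution",
    "locale",
    "local_time",
)


@dataclass
class FeedbackReport:
    message: str                                            # free text from the user
    attachments: List[str] = field(default_factory=list)    # e.g. screenshot file names
    metadata: Dict[str, str] = field(default_factory=dict)  # environment details


def missing_metadata(report: FeedbackReport) -> List[str]:
    """Return the expected metadata keys that are absent or empty."""
    return [key for key in EXPECTED_METADATA if not report.metadata.get(key)]


# Made-up example report.
report = FeedbackReport(
    message="That thing next to 'Remember me' doesn't work",
    attachments=["screenshot.png"],
    metadata={
        "os_version": "Windows 10",
        "browser": "Firefox 76",
        "screen_resolution": "1366x768",
        "locale": "de-DE",
    },
)

print(missing_metadata(report))             # which fields still need collecting
print(json.dumps(asdict(report), indent=2)) # what would be stored with the ticket
```

In practice the metadata would be filled in automatically by the feedback form rather than typed by the user, and only with fields your privacy policy allows.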

Error reports

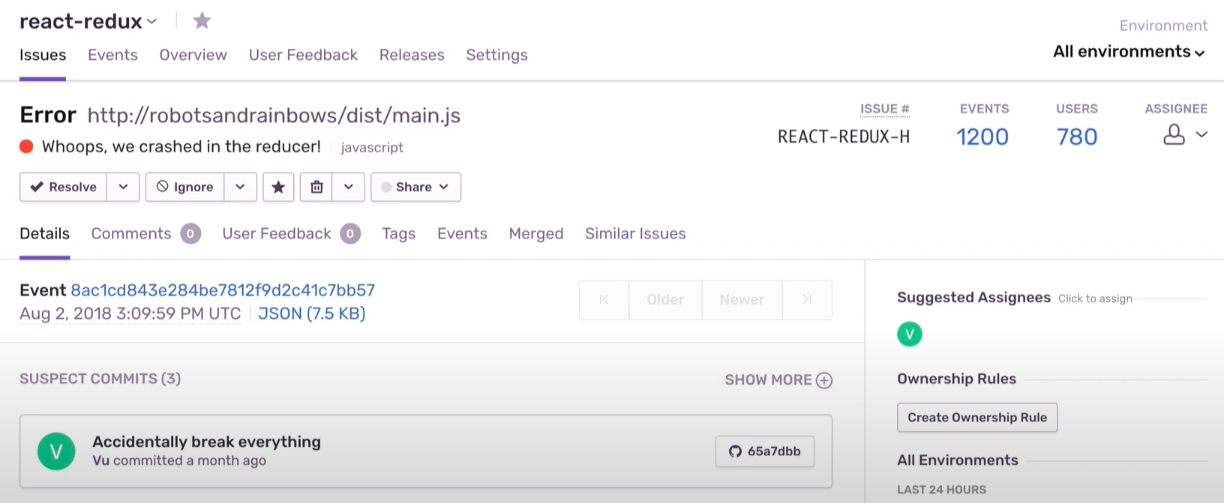

Time for the next channel – error reports. You detect an exception in your code and report it to Sentry or something similar.

It complements client feedback by addressing its shortcomings:

- You have data for all features regardless of how important they are for users

- You can see the overall impact based on the number of exceptions, not the number of complaints

- The two channels can be combined to simplify technical support

- Some of the errors would correlate with releases and could be mitigated by rolling them back

Although it may look like a perfect solution, it still has some downsides:

- Not every bug causes an exception.

Misaligned elements on your webpage may block important pieces of your workflow, but probably won't trigger an exception.

- Number of exceptions as a prioritisation factor.

Errors aren't equal, so prioritising bugs by the number of exceptions has the same issues as relying on the number of complaints.

- Hard to reproduce.

Having an exact stack trace doesn't mean that it's going to be easy to fix, especially when an exception doesn't originate in your business logic. A mysterious `cannot read property 'data' of undefined` can appear out of nowhere and frighten all the stakeholders with how many of them you receive.

How to use this channel effectively:

- Make sure you can easily locate all the recent changes.

It's important to react quickly when you start receiving a new type of report. Usually, it means that you've recently released a bug – but when and where? The more moving parts you have, the more important it is to journal all the changes and be able to correlate them with the first occurrences of a new type of exception.

- Set up SLAs for dealing with reports.

Data is useless if nobody looks at it proactively. Set up procedures for categorising new errors so you won't miss anything important.

- Invest in traceability.

Where did this incorrect request come from? How did the system reach this state? The more subsystems are involved in a use case, the harder it may be to answer those questions if your code isn't instrumented properly.
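To make the idea of correlating a change journal with first occurrences more concrete, here is a minimal Python sketch. It assumes you keep a list of releases with deployment timestamps and know when a new exception type was first seen; the `Release` class, the release names and the dates are all made up for illustration.

```python
from bisect import bisect_right
from dataclasses import dataclass
from datetime import datetime
from typing import Optional, Sequence


@dataclass(frozen=True)
class Release:
    name: str              # hypothetical identifier, e.g. a version tag
    deployed_at: datetime  # when the change reached production


def suspect_release(releases: Sequence[Release], first_seen: datetime) -> Optional[Release]:
    """Return the most recent release deployed at or before `first_seen`."""
    ordered = sorted(releases, key=lambda r: r.deployed_at)
    deploy_times = [r.deployed_at for r in ordered]
    # Number of releases deployed no later than the first occurrence.
    index = bisect_right(deploy_times, first_seen)
    return ordered[index - 1] if index > 0 else None


# Made-up change journal covering several subsystems.
journal = [
    Release("checkout-1.4.0", datetime(2020, 5, 4, 10, 0)),
    Release("search-2.1.0", datetime(2020, 5, 6, 15, 30)),
    Release("checkout-1.4.1", datetime(2020, 5, 8, 9, 0)),
]
first_error = datetime(2020, 5, 6, 16, 5)

suspect = suspect_release(journal, first_error)
print(suspect.name if suspect else "no release found")  # prints "search-2.1.0"
```

The latest release before the first occurrence is only the first suspect, not proof: the error may have been triggered by an earlier change that took time to surface, so treat the result as a starting point for investigation.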

Exploratory testing

Does your company have people who continuously work to break your systems?

I won't be surprised if it doesn't. Unfortunately, many businesses cut costs on manual testing because they see it as outmoded and redundant. Such a view is gaining popularity because manual testing is often perceived as repetitive execution of pre-defined scenarios. This type of work indeed has to be automated.

Exploratory testing, in contrast, is a process of breaking the system without predefined scenarios to find situations that developers didn't think about. When organised properly, it can cover the blind spots of Customer feedback and Error reports:

- Testers can find bugs in all types of features, even if they don't generate exceptions.

If the banners on your website aren't visible because of wrong CSS styles, testers will catch it.

- Testers can find reproduction steps for mysterious exceptions.

If you vaguely know where the exception comes from, your QA team will stress-test that piece of the system in order to cause the same crash and find a way to do it reliably.

- Testers can test new features before you enable them for real clients.

The biggest downside of the other streams of defects is that they only catch bugs which are already impacting clients. Regardless of how good your auto-tests are, there are still use cases the engineers haven't thought of. QA engineers with the right tooling and good experience can make new releases smoother.

How to use this channel effectively:

- Invest in tools for testing.

Do your QA engineers have different environments for testing, or do they use the same setup for everything? Try to provide them with all the right tools: virtual machines, mobile devices, accessibility software.

- Automate as much as possible.

Obviously, it's a huge waste if smart testers have to manually do something that can be done by a machine. If they need to perform the same actions every test cycle, automate it.

- Make sure they know how your system should behave.

In other words, document your features. Many teams rely on common sense and expect that testers will recognise a bug when they see it. Unfortunately, that's not always the case: if a tester has to ask colleagues whether something is a bug, it's a sign of unclear behaviour that should be documented.

- Involve testers in prioritisation.

QA engineers should not only find a bug and create a ticket; they should have all the information to assess the damage and suggest a priority. Make sure they can detect if something is a new regression, know the relative impact of different features and have all the necessary information about past and planned releases.

Stats anomalies

Do you collect statistics? Page views, registrations, orders, clicks on advertisements? If yes, this channel can be the most important one for your business because it shows how bugs affect your key metrics.

You released a new version and the number of registrations suddenly fell by 15% – having this data makes prioritisation much more straightforward.

Although it may sound like a panacea, using only this channel may not be enough because:

- Anomalies don't necessarily correlate with your releases.

There are many factors that can affect your system, and you may need to dig deeper to understand how to fix it.

- Stats may be impacted by various factors, not only bugs.

Anomalies can be caused by genuine reasons: weather, public holidays, major events – not all of them are bad, even if your metrics go down.

- Changes aren't always rapid.

It's simple when you update business logic that lives on the server side, preferably on one instance. You introduce a bug and the metrics immediately go down – easy to catch. When it comes to front-end, mobile applications or other systems with a longer rollout, the change isn't that sharp.

How to use this channel effectively:

- Make your dashboards as close to real-time as possible.

Data is much less useful when it comes with a big lag, especially if you have to wait for a Hadoop job to generate a daily report (yes, that's a very frequent situation!).

- Collect data about user environment.

Most of the major systems for collecting metrics allow sending metadata along with stats. It can give your QA engineers enough clues to reproduce the issue or can help you figure out a genuine reason for the anomaly.

- Set up alerts.

Use all the capabilities of your system – many of them allow you to set thresholds beyond which something is considered an anomaly, so you don't need to monitor graphs yourself.
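To show what such a threshold might look like, here is a minimal Python sketch that compares today's value of a metric with the average of a recent baseline and raises a flag when the relative drop crosses a limit. The function names, the 10% threshold and the registration numbers are assumptions made up for illustration; real monitoring systems usually offer this as configuration rather than code.

```python
from statistics import mean
from typing import Sequence


def relative_drop(baseline: Sequence[float], current: float) -> float:
    """Fraction by which `current` is below the baseline average (0.0 if not below)."""
    expected = mean(baseline)
    if expected <= 0:
        return 0.0  # nothing meaningful to compare against
    return max(0.0, (expected - current) / expected)


def should_alert(baseline: Sequence[float], current: float, threshold: float = 0.10) -> bool:
    """True when the metric fell by at least `threshold` relative to the baseline."""
    return relative_drop(baseline, current) >= threshold


# Made-up daily registrations for the previous week (average: 200).
registrations_last_week = [200, 210, 190, 205, 195, 200, 200]
registrations_today = 170

drop = relative_drop(registrations_last_week, registrations_today)
print(f"drop: {drop:.0%}, alert: {should_alert(registrations_last_week, registrations_today)}")
# prints "drop: 15%, alert: True"
```

An alert like this only says that something changed. As noted above, the cause may be a public holiday rather than a bug, so the flag is a prompt to investigate, not a verdict.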

What is your score?

How many of those streams do you currently support? Do you rely on one of them or have multiple?

All four streams can give you a very good understanding of what's going on:

- Customer feedback — what happens?

- Error reports — how many users are affected? when did it start?

- Exploratory testing — how to reproduce?

- Stats anomalies — how does it impact business?

Then prioritisation becomes much easier, just as with the leaking tap from our earlier example:

Situation 1:

All new clients are affected since we've released "XYZ" — nobody can register on our website.

It decreased the number of orders by 20%.

Solution: revert "XYZ"

Situation 2:

We had two complaints from the customers, but only people with IE11 are affected.

It started 3 weeks ago and hasn't visibly impacted any of the key metrics yet.

Solution: won't fix, show a notification that we don't support IE11.